Update 2:

Further adjustments and tests included allotting even fewer cores to the CPU slot, disabling Hyper-Threading, and discontinuing CPU folding altogether (i.e., removing the slot).

However, the one change that made a significant and consistent difference over a few nights of monitoring (an average about 5 million PPD higher) was upgrading to Windows 11. I didn’t change or reinstall any drivers or programs; I simply upgraded from Windows 10 to 11 via Windows Update.

Update:

After some additional research and monitoring, I decided to test whether the CPU was a bottleneck by adjusting the number of virtual cores/threads the CPU slot was allowed to occupy. I’ve set it to 8, 10, or 12 in the past few runs. At first, the change appeared to make a huge difference (e.g., an average of ~35M PPD for a couple of projects). Unfortunately, the uplift seems to have been a coincidence… maybe?

Original:

For the past couple of years, I’ve been running Folding@Home overnight (off-peak electricity hours) and only during the winter months. Originally, the config included dual GPUs of the RTX 3080 family. I recently upgraded to the RTX 40 series and am somewhat disappointed (as well as somewhat baffled) by the performance with Folding@Home (F@H). While it’s not an essential task, I am extremely curious as to why newer-generation GPUs are performing poorly… if that’s even what’s happening.

On every WU, the performance is reported as significantly below the average, anywhere from five to fifty percent. For comparison, the 3080 and 3080 Ti I had here were at least average, and often above.

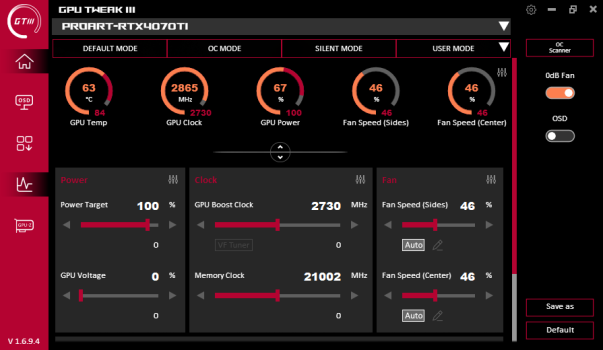

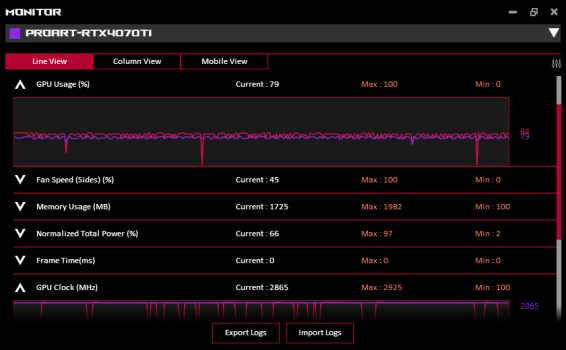

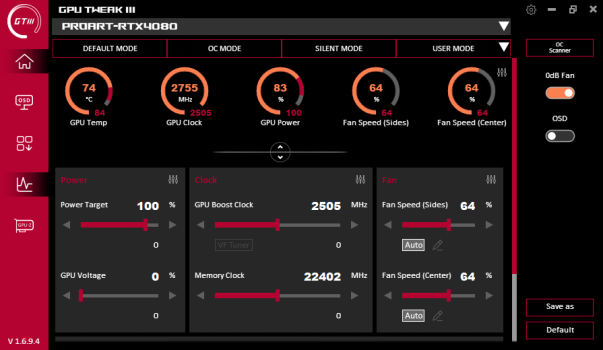

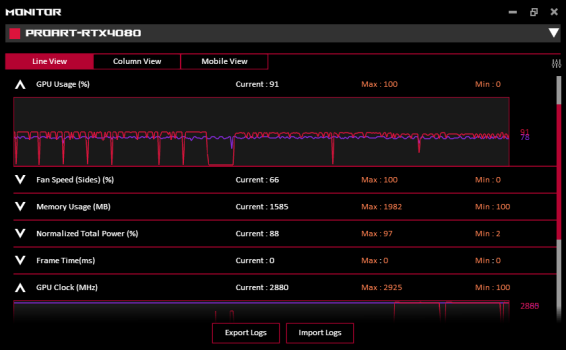

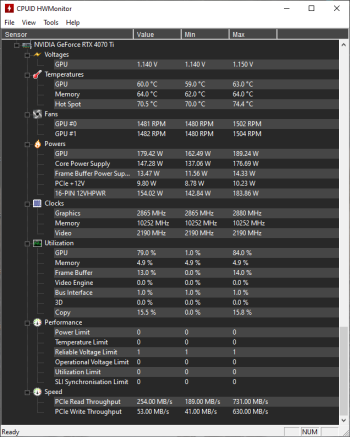

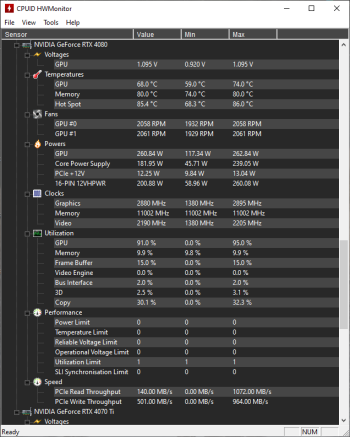

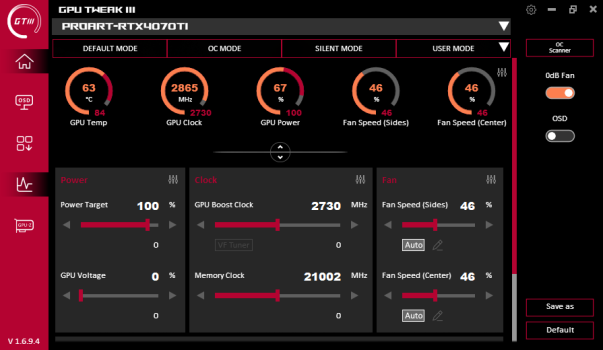

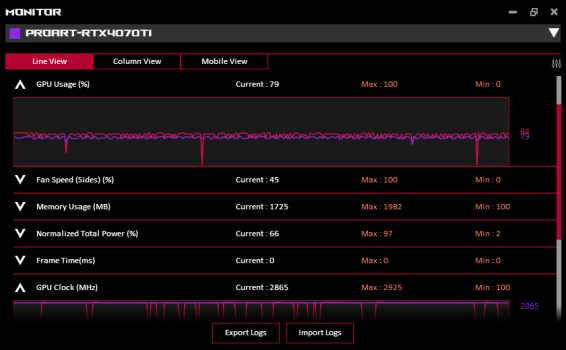

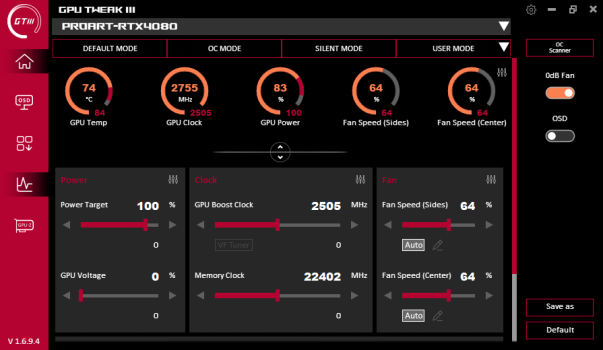

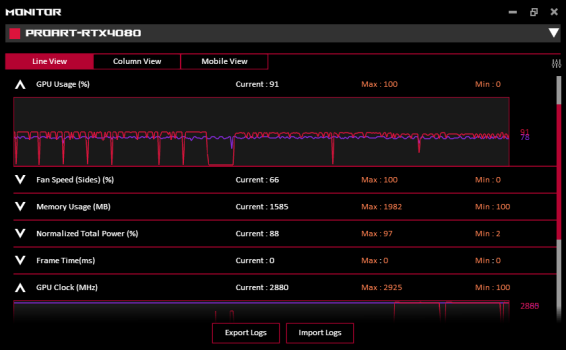

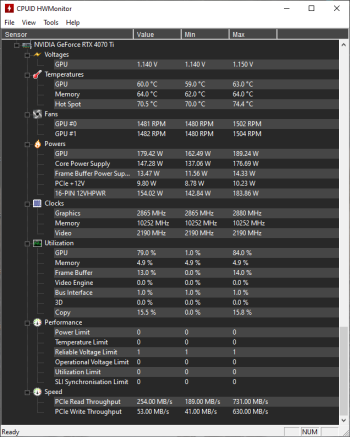

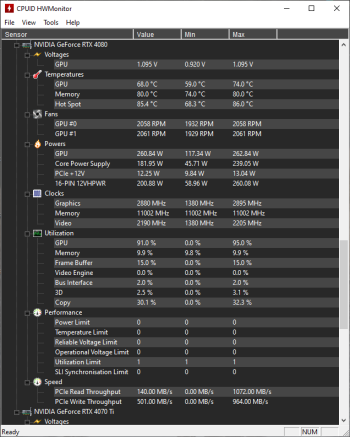

I’ve done a clean reinstall of the latest drivers (v546.01), run the graphics cards through a few benchmarks, and don’t see much amiss in the performance values/details. For example, the GPU clock speeds are higher than Nvidia advertises, and some thermals are on the high side but definitely below the safe threshold.

In HWMonitor, I have noticed frequent run-ins with Reliable Voltage Limit and Utilization Limit. However, I can’t figure out exactly what triggers those limits, or whether they are something I can adjust to overcome.
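For anyone who wants to cross-check those limit flags outside HWMonitor, here is a rough sketch that asks `nvidia-smi` for each GPU's SM clock, utilization, and active throttle-reason bitmask, then decodes the bitmask. The bit names follow NVML's throttle-reason constants as I understand them, and they do not map one-to-one onto HWMonitor's "Reliable Voltage Limit" / "Utilization Limit" labels, so treat the output as a second opinion rather than an explanation. The helper names (`decode_throttle_mask`, `parse_line`, `sample`) are my own, not part of any tool.

```python
import subprocess

# Bit values assumed from NVML's clocks-throttle-reason constants.
THROTTLE_BITS = {
    0x1: "gpu_idle",
    0x2: "applications_clocks_setting",
    0x4: "sw_power_cap",
    0x8: "hw_slowdown",
    0x10: "sync_boost",
    0x20: "sw_thermal_slowdown",
    0x40: "hw_thermal_slowdown",
    0x80: "hw_power_brake_slowdown",
    0x100: "display_clock_setting",
}

# Fields requested from nvidia-smi, in the order parse_line expects them.
QUERY = "index,clocks.sm,utilization.gpu,clocks_throttle_reasons.active"


def decode_throttle_mask(mask_text):
    """Turn a hex bitmask string such as '0x0000000000000004' into reason names."""
    mask = int(mask_text.strip(), 16)
    return [name for bit, name in THROTTLE_BITS.items() if mask & bit]


def parse_line(line):
    """Parse one CSV row produced by the query above (no header, no units)."""
    index, sm_clock, util, mask = [field.strip() for field in line.split(",")]
    return {
        "gpu": index,
        "sm_clock_mhz": sm_clock,  # kept as text in case the driver reports [N/A]
        "utilization_pct": util,
        "reasons": decode_throttle_mask(mask),
    }


def sample():
    """Run nvidia-smi once and return one parsed dict per GPU."""
    result = subprocess.run(
        ["nvidia-smi", f"--query-gpu={QUERY}", "--format=csv,noheader,nounits"],
        capture_output=True,
        text=True,
        check=True,
    )
    return [parse_line(row) for row in result.stdout.splitlines() if row.strip()]


if __name__ == "__main__":
    for gpu in sample():
        print(gpu)
```

Running this every few seconds while a WU is in progress should show whether the cards spend most of their time power-capped, thermally limited, or simply waiting on work.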

And quite frankly, I hate not understanding.

Any insight or suggestions?
