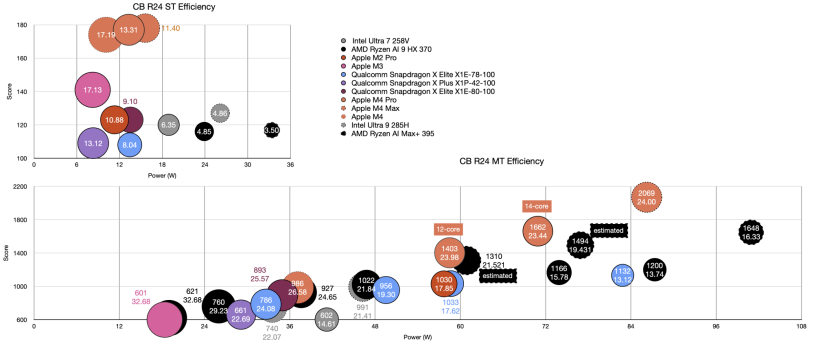

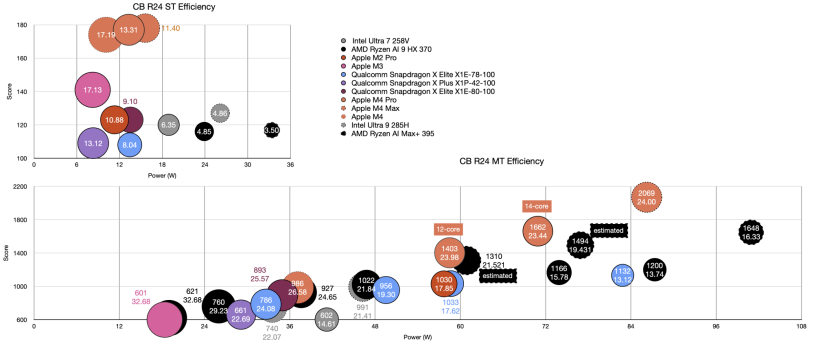

My Strix Halo vs M4 CPU analysis (click to expand), as always using NBC data and subtracting out idle power:

Things are getting a bit scrunched up and I haven't had time to prune the data points (unfortunately Numbers isn't great here: change the order or remove a data point and the entire graph's style has to be redone).

With respect to the Strix Halo in the Asus ROG Flow (AI Max+ 395), I expected higher power/lower efficiency in ST, but I thought there would be better performance than the Strix Point. Instead it is just the same performance with much worse efficiency - not totally unexpected given the larger SOC design, but this is substantially worse. It uses almost as much power in ST as the base M4 does in multicore. Also, this is with the idle power removed, and the idle power here is substantial - 11.2W, and this is all due to the SOC: there is no dGPU to blame and it is a <14" laptop/tablet hybrid. With only one data point I don't know if that is a feature of the Halo's design or Asus' implementation.

Similar story with multithreaded efficiency. The 14-core M4 Pro is over 40% more efficient for similar performance (side note: in this analysis I use the 14-core M4 Pro from the 14" Pro, which had slightly lower performance and slightly better efficiency than the 16" device reported in the Halo article). The Halo is getting 41% more performance than the Strix Point for roughly the same power increase when the HX 370 nets a score of 1166. Halo's MT efficiency improves dramatically as power draw lowers. Unfortunately NBC did not measure wall power at each TDP. However, while TDP doesn't correspond well to wall power in an absolute sense, the Strix Point analysis, where they did measure both, seems to confirm that relative TDP values are proportional to the wall power. So, assuming the same is true for the Halo, I can still use the TDP values to estimate wall power, taking 70W TDP as the default state in which wall power was measured. The Halo is well off the 14-core's efficiency at similar power and doesn't quite reach the 12-core M4 Pro's efficiency, but is much closer, only about 11% lower.

At first blush, this is not a bad result for AMD. Strix Point is only about 10% less efficient than the base M4 while the Halo is about 11% lower than the 12-core M4 Pro. However, it should be pointed out that these chips are massive compared to the Apple ones I'm comparing them to, and CB R24 is a nearly embarrassingly parallel MT test where SMT2 yields around a 25% increase in performance. Strix Point therefore essentially has 12 P-cores (4 Zen 5 AVX 256-bit cores + 8 Zen 5c cores) for 15 performance threads equivalent, while the base M4 has only 4 P-cores + 6 E-cores for maybe 6 performance threads equivalent. Meanwhile, Halo has 16 full Zen 5 AVX-512 cores for 20 performance threads equivalent, as compared to the 12-core M4 Pro, which has 8 P-cores and 4 E-cores for roughly 9.3 performance threads equivalent. Things get even more stark when considering die size.
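The "performance threads equivalent" figures above can be reproduced with a small sketch. The weights are assumptions read off the numbers in this post rather than measured values: SMT2 is taken as a 1.25x multiplier per core, and an Apple E-core is weighted at one third of a P-core, which is what 4P + 6E ≈ 6 and 8P + 4E ≈ 9.3 imply. The function name is made up for illustration.

```python
# Assumed weights, inferred from the figures quoted in the post.
SMT_UPLIFT = 1.25      # SMT2 yields roughly +25% in CB R24
E_CORE_WEIGHT = 1 / 3  # implied by 4P + 6E ~= 6 and 8P + 4E ~= 9.3


def perf_thread_equivalents(p_cores, e_cores=0, smt=False):
    """Rough count of P-core-equivalent threads for an MT test like CB R24."""
    p_contribution = p_cores * (SMT_UPLIFT if smt else 1.0)
    return p_contribution + e_cores * E_CORE_WEIGHT


chips = {
    # Zen 5 and Zen 5c are both counted as P-cores here, as in the post.
    "Strix Point": perf_thread_equivalents(12, smt=True),
    "Strix Halo": perf_thread_equivalents(16, smt=True),
    "M4": perf_thread_equivalents(4, e_cores=6),
    "M4 Pro (12-core)": perf_thread_equivalents(8, e_cores=4),
}

for name, equivalents in chips.items():
    print(f"{name}: {equivalents:.1f} performance threads equivalent")
```

This is only a bookkeeping device; the real contribution of an E-core or of SMT varies by workload.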

Based on annotated die shots, I've estimated that the Strix Point CPU is roughly the same size in mm^2 as the M4 Max's! (The M4 Max CPU size is estimated by multiplying up the appropriate component sizes as measured on the M4 die shot.) Meanwhile the Halo is nearly double that size.

Here are my estimates:

| CPU | mm^2 |
| --- | --- |
| Apple M4 | 27.0 |
| Apple M4 12-core Pro | 46.1 |
| Apple M4 14-core Pro | 52.2 |
| Apple M4 Max | 58.2 |
| Strix Point | 58.1 |
| Strix Halo | 109.5 |
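To put those estimates side by side, here is a minimal sketch that computes area ratios directly from the table. The pairings (Strix Point vs base M4, Halo vs 12-core M4 Pro) follow the efficiency comparisons made earlier in the post; the helper name is hypothetical.

```python
# CPU area estimates in mm^2, copied from the table in this post.
cpu_area_mm2 = {
    "Apple M4": 27.0,
    "Apple M4 12-core Pro": 46.1,
    "Apple M4 14-core Pro": 52.2,
    "Apple M4 Max": 58.2,
    "Strix Point": 58.1,
    "Strix Halo": 109.5,
}


def area_ratio(chip, reference):
    """How many times larger `chip`'s CPU area is than `reference`'s."""
    return cpu_area_mm2[chip] / cpu_area_mm2[reference]


comparisons = [
    ("Strix Point", "Apple M4 Max"),
    ("Strix Halo", "Strix Point"),
    ("Strix Point", "Apple M4"),
    ("Strix Halo", "Apple M4 12-core Pro"),
]

for chip, reference in comparisons:
    print(f"{chip} vs {reference}: {area_ratio(chip, reference):.2f}x")
```

These are raw mm^2 ratios with no adjustment for the different process nodes.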

A small note about what is included. SLC is NOT included for any SOC above, and neither are Apple's E/P-core AMX units (which add about 10%). The figures include AMD's L1-L3 and Apple's L1 and L2.

Of course there is a major caveat here. Apple's chips are manufactured on TSMC's N3E while AMD's are manufactured on N4P (maybe N4X for the Halo, more on that below). Quantifying how much that matters, i.e. how many transistors each chip uses, is nearly impossible. We can try to use references (1 and 2) to estimate the density difference, but that is so dependent on the proportion of SRAM to logic that even knowing the transistor count of the whole die doesn't tell us much. SRAM isn't expected to change in density, but logic did substantially (although even things annotated as cache can contain logic, as Apple's 16MB cache is 30% smaller than AMD's 16MB cache on the Halo). By my estimations, Apple's chips are anywhere from 15-40% more dense than AMD's depending on what values you want to take, with, I believe, TSMC themselves giving a rough 30% size decrease for N3E compared to N5 on their unknown reference chip (and N4P/X being <6% smaller than N5, since SRAM didn't change at all and logic decreased by 6% - although Granite Ridge is supposedly quite a bit denser than its N5 predecessor so 🤷‍♂️).
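As a rough sanity check on how much the node difference could matter, the sketch below shrinks the AMD CPU areas by the 15-40% density range quoted above. It assumes the whole CPU block scales uniformly with density, which is a deliberate simplification (SRAM and logic scale very differently), so treat the output as a bound on the argument rather than a real N3E area estimate. The function name is hypothetical.

```python
# CPU area estimates (mm^2) from the table in this post.
amd_area_mm2 = {"Strix Point": 58.1, "Strix Halo": 109.5}
apple_area_mm2 = {"Apple M4": 27.0, "Apple M4 12-core Pro": 46.1}

# Estimated range for how much denser Apple's N3E chips are than AMD's.
DENSITY_ADVANTAGE_RANGE = (0.15, 0.40)


def density_adjusted_area(area_mm2, density_advantage):
    """Area if the block shrank uniformly by the density advantage (assumption)."""
    return area_mm2 / (1.0 + density_advantage)


# Pairings follow the efficiency comparisons made in the post.
pairs = [("Strix Point", "Apple M4"), ("Strix Halo", "Apple M4 12-core Pro")]

for amd, apple in pairs:
    for advantage in DENSITY_ADVANTAGE_RANGE:
        adjusted = density_adjusted_area(amd_area_mm2[amd], advantage)
        ratio = adjusted / apple_area_mm2[apple]
        print(f"{amd} at +{advantage:.0%} density: {adjusted:.1f} mm^2, "
              f"{ratio:.2f}x {apple}")
```

Under this simplification the AMD CPUs remain well over 1.5x the size of their Apple counterparts even at the generous end of the range.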

So how does Strix Halo compare to AMD's Granite Ridge (desktop Ryzen)? My guess is they are actually manufactured on different processes. N4X and N4P are nearly identical, so if you look at die shots the two CPUs are exactly the same, but the two dies outside of the CPU look ever so slightly different. Further, what N4X adds to N4P are a couple of extra transistor types for both extra low power and high power but more leakage. Look at the desktop Ryzen 9950X: it can achieve a score of 2254 in CB R24. According to NBC, it gets 7.18 pts/W, which, subtracting out 100W idle power since it was connected to a 4090 at the time, means it used roughly 213W above idle to achieve that score. Meanwhile Strix Halo's performance is already tapering at much lower wattages - going from 101W wall power to 117W wall power (16%) only results in 5% extra performance (117W is off the chart). We saw Strix Point behave the same way, eventually maxing out at just over 1200pts. Given how far apart 2254 and 1731 are, it is difficult to see how Halo could reach that even with double the power when the gains are this small (and they will continue to get smaller as power increases). Now, this was measured in the tablet hybrid form factor and there is dispute online whether Granite Ridge itself is N4X or N4P, so this isn't a sure thing. But with the slight differences in die and the seemingly different performance characteristics, it looks reasonable to me that something is different between the desktop Ryzen and Strix Halo chips, where the latter is probably geared towards performance at lower power, while the former is geared towards absolute performance.
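The arithmetic behind the 9950X figure and the Halo's tapering can be sketched as follows. All inputs are the numbers quoted in this post (NBC's score and pts/W, my assumed 100W idle, and the two Halo wall-power points); the function name is made up for illustration.

```python
def power_above_idle(score, points_per_watt, idle_w):
    """Total power implied by score / efficiency, minus the idle draw."""
    return score / points_per_watt - idle_w


# Desktop Ryzen 9950X: 2254 pts at 7.18 pts/W, with ~100W idle assumed
# because the system was connected to a 4090 at the time.
ryzen_9950x_w = power_above_idle(2254, 7.18, idle_w=100.0)
print(f"9950X power above idle: {ryzen_9950x_w:.0f} W")

# Strix Halo: 101W -> 117W wall power for about 5% more performance.
power_increase = 117 / 101 - 1
perf_increase = 0.05
efficiency_change = (1 + perf_increase) / (1 + power_increase) - 1
print(f"Halo power increase: {power_increase:.1%}")
print(f"Halo efficiency change over that step: {efficiency_change:.1%}")
```

The first result lands within a watt of the 213W quoted above (the pts/W figure is itself rounded), and the second shows efficiency falling over that last step since power grows faster than performance.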

Bottom line: Apple has a huge advantage in terms of performance/Watt AND performance/Area - perhaps not hugely surprising, since the amount of power used should be proportional to the amount of silicon die area turned on, but still AMD needs far bigger CPUs to compete with Apple. This probably translates to increased profit margins for Apple (with the caveat that Apple probably spends a lot more per die by being first on the most advanced node), especially since they package their chips themselves without an OEM middle man. This ability to build smaller but still performant CPUs is also probably one factor that allows them to more cost-effectively build something like the M4 Max (and Ultra or whatever Hidra ends up being), whereas the Strix Halo, for all its size, is closer to an M4 Pro competitor. It isn't clear whether, beyond consoles, there is an appetite in the PC space for larger SOCs, and indeed the Strix Halo itself is as yet unproven in the marketplace.